Lessons from Building a Simple RAG

Over the weekend, I wanted to build a quick project around Retrieval-Augmented Generation (RAG). Instead of going for a generic demo, I tried to ground it in a real use case:

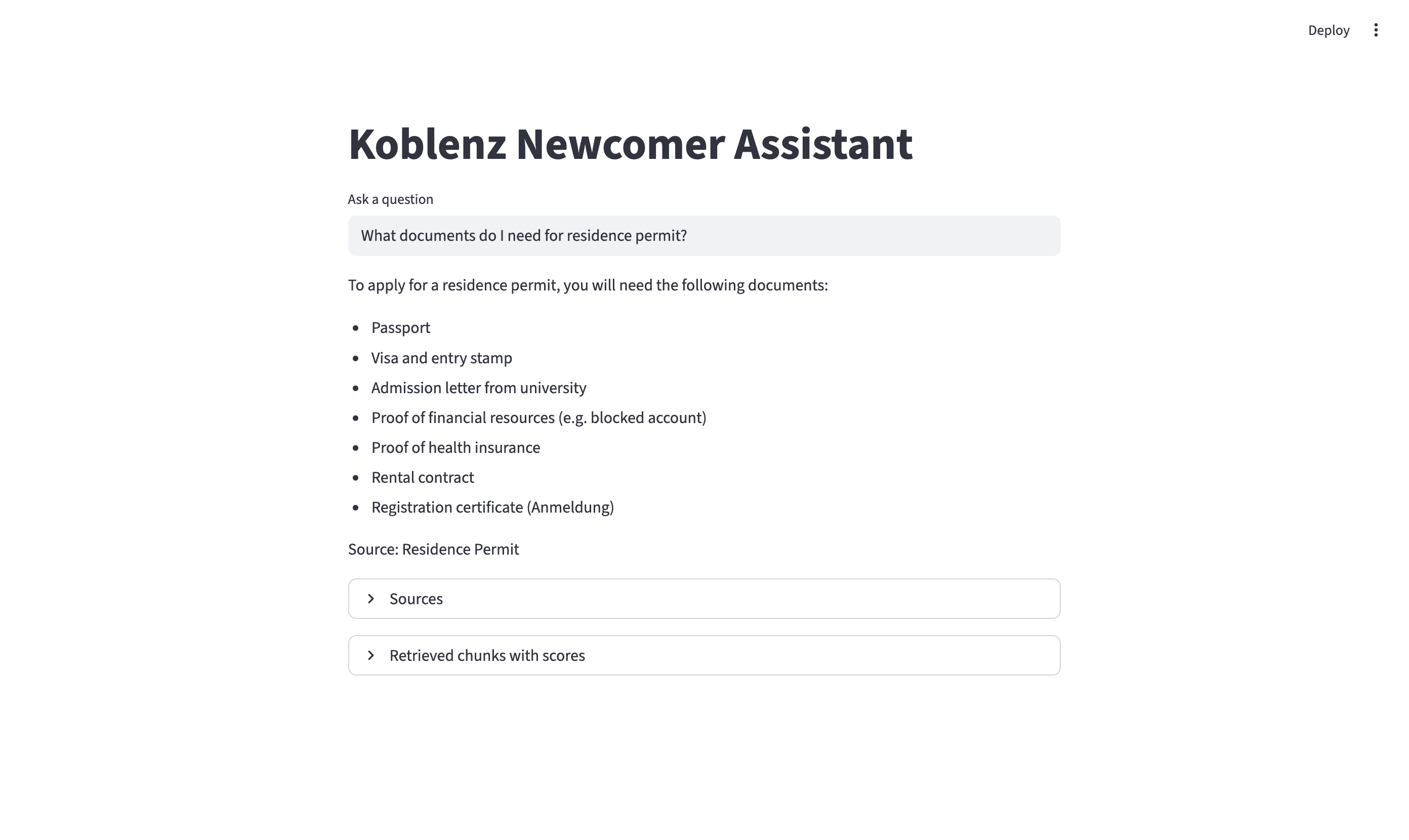

A chatbot to help newcomers especially students in Koblenz to get answers to administrative questions.

The Idea

New students moving to Koblenz (or any other part of Germany for that matter) have many urgent questions like:

- How do I do city registration?

- What documents are needed for a residence permit?

- How do I enroll at my university?

These answers exist across different official websites, but they are:

- scattered

- sometimes hard to navigate

- sometimes in German, which can be a barrier for international students

So I thought:

What if I could build a simple assistant that answers these questions using official information?

Preparing the Data

For the knowledge base, I scraped content from official sources (city, university, student services) into individual text documents:

docs/

├── anmeldung.txt

├── residence_permit.txt

├── enrollment.txt

├── visa.txt

├── student_services.txtEach file contained:

- a clear title

- structured sections (documents, requirements, notes)

- source links

Tech Stack

To quickly prototype the idea, I used Streamlit for the frontend and ChromaDB for vector storage. For embeddings and generation, I used OpenAI's APIs (text-embedding-ada-002 and GPT-4o-mini).

Basic RAG Flow

User Question

↓

Convert to Embedding

↓

Vector Search (ChromaDB)

↓

Retrieve Relevant Documents

↓

Inject Context into Prompt

↓

LLM Generates Answer

Testing

When testing:

What documents do I need for residence permit?

The system returned documents from visa.txt instead of residence_permit.txt.

After some debugging, I found it was due to both documents sharing many common terms like 'passport', 'health insurance' and 'visa'. The similarity scores were therefore very close, and the system couldn't distinguish which one was more relevant.

residence_permit.txt - Score: 0.24015051126480103

visa.txt - Score: 0.2380703091621399

anmeldung.txt - Score: 0.25784754753112793

Lower score = better match, but the differences were too small to clearly separate topics.

As a result, multiple documents were retrieved, context was mixed and the final answer was incorrect.

The Fix: Simple Reranking & More Refined Prompting

Instead of relying purely on vector similarity, I added:

- keyword-based boosting

- if query contains “residence permit” → boost residence_permit.txt

- top-1 selection

- only use the most relevant document

- refined prompting

- stricter grounding and more constrained answering

This significantly improved answer accuracy for the same query:

residence_permit.txt - Adjusted Score: 0.21015051126480103

visa.txt - Adjusted Score: 0.2380703091621399

anmeldung.txt - Adjusted Score: 0.25784754753112793

Lessons

- RAG can be powerful but requires careful tuning of retrieval and ranking to work well.

- Simple keyword-based adjustments and refining the prompt can significantly improve relevance without needing complex models.

- Adding structured metadata (like titles) to documents can help with both retrieval and explainability.

Next Steps

If I continue this project, I would add:

- chat interface for conversational flow

- document upload functionality

- PDF support

- function to scrape websites from a list of URLs and generate .txt documents

- more sources to extend support beyond students

Github repo: https://github.com/evangelistagrace/koblenz-newcomer-assistant